Artificial Intelligence (AI), simply put, is a branch of computer science that develops computers that can perform tasks normally requiring human intelligence. When people think of AI, robots that can think like humans (and might want to destroy humanity) often come to mind, thanks to the prevalence of sci-fi movies such as The Terminator; I, Robot; and Avengers: Age of Ultron. However, most AI researchers are focused less on producing humanoid robots and more on developing intelligent systems that are meant to make people’s lives easier, whether or not they always succeed. Read on to find out how emergent AI is having a huge impact on our society and whether we are ready for it.

Artificial Narrow Intelligence (ANI)

Sometimes referred to as Weak AI, Artificial Narrow Intelligence is AI that specializes in specific human tasks. The emergence of narrow AI dates back to 1939, when the relay-based Elektro robot, built by Westinghouse, responded to the rhythm of voice commands and delivered pre-recorded wisecracks. Since then, “weak” or “narrow” AI systems have spread everywhere, from Apple’s Siri to Facebook’s friend recommendations. They are in our car navigation, traffic control and airport immigration systems.

Google is a major player in the field of AI. In May 2013, Google and NASA teamed up to launch the Quantum Artificial Intelligence Lab, which houses a quantum computer, to study how quantum computing might advance machine learning, the practice of finding complex patterns in data in order to make predictions. “Machine learning is all about building better models of the world to make more accurate predictions,” Google noted in a blog post. “If we want to cure diseases, we need better models of how they develop. If we want to create effective environmental policies, we need better models of what’s happening to our climate. And if we want to build a more useful search engine, we need to better understand spoken questions and what’s on the web so you get the best answer.”

Artificial General Intelligence (AGI)

As of now, we are still far from creating a strong AI that can perform any intellectual task that a human being can. Nevertheless, it remains a frightening possibility, because such machines could surpass us and even come to dominate us. If that were to happen, “the development of full artificial intelligence could spell the end of the human race,” famed physicist Stephen Hawking told the BBC. Billionaire entrepreneur Elon Musk once tweeted, “We need to be super careful with AI. Potentially more dangerous than nukes.”

Responding to this potential threat, AI experts around the globe are signing an open letter issued by the Future of Life Institute that pledges to safely and carefully coordinate progress in the field to ensure it does not grow beyond humanity’s control. A research document attached to the open letter outlines potential pitfalls and recommends guidelines for continued AI development.

The Opportunities

The reason AI is always a meaty discussion is that it offers many benefits to people’s lives.

Improved AI will lead to significant productivity advances as machines perform better than humans at certain tasks. There is substantial evidence that self-driving cars will reduce road collisions, and the resulting deaths and injuries, as machines avoid human errors, lapses in concentration and defects in sight, among other problems. Intelligent machines, having faster access to a much larger store of information and being able to respond without human emotional biases, might also perform better than medical professionals in diagnosing diseases.

Machines will replace humans in many tedious, laborious, dangerous, and strenuous jobs. For example, we can send machines on one-way trips into space, let them work in mines, or use them as rescuers. We do not need to worry about endangering their lives because machines are not “alive” to start with.

AI might also lead to a society in which people have greater freedom and greater incentive to concentrate on what is most fully human, such as developing our relationships with family and friends or playing sports to keep fit. Similarly, the new technology might make it possible for many more people to engage in social activities in education, health, recreation, and welfare.

The Challenges

Many people fear that in developing AI, we may be sowing the seeds of our own destruction: political, economic, and moral.

Political destruction could result from the exploitation of AI (and highly centralized telecommunications) by a totalitarian state. If AI research produces programs capable of understanding text, understanding speech, interpreting images, and updating memory, the amount of information about individuals potentially available to governments would be enormous.

Economic destruction might happen too if changes in the patterns and/or rates of employment are not accompanied by radical structural changes in industrial society and in the way people think about work. New ways of defining and distributing society’s goods will have to be found. At the same time, our notion of work will have to change.

Moral destruction comes when our legal systems cannot assign blame for an accident involving a self-driving car, or cannot decide whether the use of autonomous weapons in war conflicts with basic human rights.

The Verdict

Will artificial intelligence make human society a utopian dream or a dystopian nightmare? Will our descendants honor us for making machines do things that human minds do, or criticize us for irresponsibility and hubris? Will the human race come to an end or advance to a higher level?

The key is to be aware of both the benefits and the risks of AI development. The future outcome will depend largely on social and political factors. It is important that today’s public and politicians know as much as possible about the double-edged nature of AI.

The matter needs careful analysis, with technical AI experts, legal experts, economists, policymakers, and philosophers working together. And as this affects society at large, the public also needs to be represented in the discussions and in the decisions that are made.

What do you think?

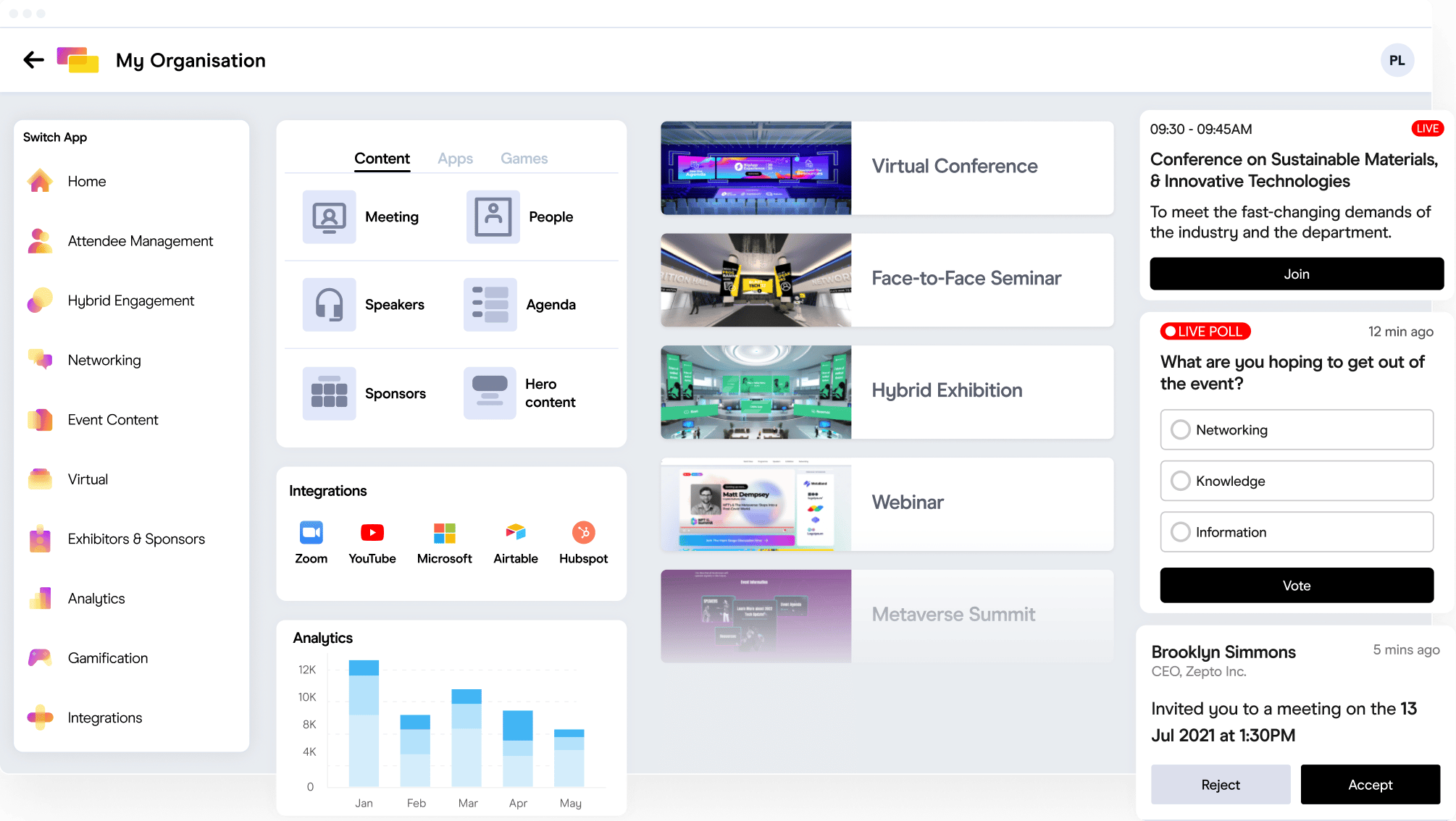

Is our society ready for Artificial Intelligence? Why or why not? And how does it affect the events industry? Let us know your thoughts in the comments!